This is just too good not to share.

Monday, October 31, 2011

Thursday, October 27, 2011

Week 11: Experiment and Explanation

After immersing ourselves in certain messy social aspects of science, it’s time to return to some of the questions about evidence for our theories that crop up at an individual level. For the next few weeks, we’ll descend back to the individual level (or anyway the level at which sociological factors drop out).

This week we tackle two very different concepts that are central to science: experimentation and explanation. Next week, we try to leverage explanatory considerations to solve (or at least make progress on) the problem of induction. After that, we’ll address a perennial concern for philosophy of science: the realism/anti-realism debate, drawing on lots of the background you’ve been acquiring. So much for foreshadowing. To the work at hand. . . .

Start with experiment. Do we even have a clear idea of what it is? How, for instance, does it differ from simple observation? (Of course, as we’ve already seen from reading both French and Feyerabend, observation isn’t nearly as simple as we’re often inclined to suppose.) How should experiments fit in with theories? Such questions are important not just theoretically, but (as O’Malley et al. argue) practically for how science is performed and funded. Their paper, published in a high-profile biology journal, examines some of the statements of funding agencies like the NSF and NIH to see how well they fit into our best understanding of how science works.

Our topic for Thursday will be explanation. What is it to explain something? I take it that we are often fairly good at offering and evaluating explanations. But once again we run into the problem of not being very good at describing what it is we’re doing. Strevens’ paper surveys some of the most popular and important accounts of scientific explanation (and the problems that they face). Though his paper doesn’t mention this specifically, you might think about the methodology of investigating these various models. How exactly do we (and should we) approach the question of whether an account of explanation is adequate?

Tuesday (11/1): Experiment & Models

• French, Ch. 6 (you might wish to review Ch. 5 as well)

• Hacking, “Experiment” (Chapter 9 of Representing and Intervening) [PDF]*

• O’Malley et al. “Philosophies of Funding” [PDF]

Questions: (respond to one)

- What is the difference between observation and experimentation? Describe as clearly and neutrally as you can (i.e., see if you can avoid using those words to explain the difference). Is there a clean division between the two?

- What do you think of Hacking’s view that phenomena are “created” by experiment — that they are, in a sense, artifacts of our technology? What would the consequences of this claim be, if true?

- Say something about how you think models fit into science. You might want to think back to the Oreskes/Conway reading.

- How do Hacking’s insights play a role in the O’Malley et al. paper?

Thursday (11/3): Explanation

• Strevens, “Scientific Explanation” [PDF] (you may skim the sections on the IS account and the Statistical Relevance account — I won’t address these in class unless someone specifically wants to).

• French, pp. 98–99 briefly considers explanation: you might wish to look this over at this point too (it’s in Ch. 7 on Realism, which we’ll read in a few classes).

Questions: (respond to one)

- Read Study Exercise 2 in French (p. 88). Address the questions: Do you think it’s possible to identify the cause of the crash? and Do you think scientists face a similar sort of situation when they try to explain some phenomenon?

- See if you can come up with a different example along the lines of the flagpole and storm examples that illustrates a problem for the DN account of explanation.

- Between the Unification and Causal approaches to explanation, which appears to you to be the more appealing, and why?

- Address the methodological question I broached above.

Wednesday, October 26, 2011

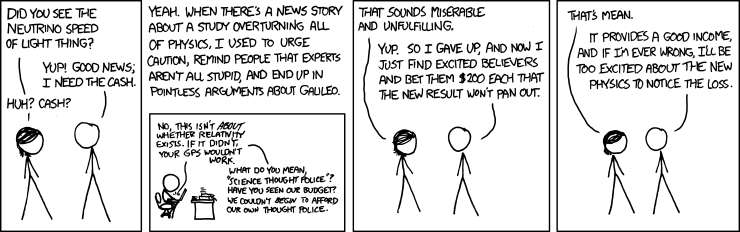

Superluminal Neutrinos

I mentioned this case about the OPERA neutrino experiment yesterday in class. Here's the original news report from Nature. It seems to me revealing of a lot of different ideas we talked about: most clearly, the resilience of well-established theories in the face of apparent falsification. Think of how many people reacted to the apparent falsification of special relativity with a "ho hum: there must be something wrong with the experiment or their calculations" (without even knowing anything about the experiment). (Compare our reaction to the "pigheaded" opponents of Galileo.)

One of the most telling problems with the hypothesis that neutrinos go faster than light is that we would have noticed this before. From the news report above:

"Ellis, however, remains sceptical. Many experiments have looked for particles travelling faster than light speed in the past and have come up empty-handed, he says. Most troubling for OPERA is a separate analysis of a pulse of neutrinos from a nearby supernova known as 1987a. If the speeds seen by OPERA were achievable by all neutrinos, then the pulse from the supernova would have shown up years earlier than the exploding star's flash of light; instead, they arrived within hours of each other. "It's difficult to reconcile with what OPERA is seeing," Ellis says."

Follow-up reports offer some interesting suggestions about how the study might have gone wrong:

• "Faster-than-light neutrinos face time trial" (Nature)

• "Finding puts brakes on faster-than-light neutrinos" (Nature)

• "Faster-than-Light Neutrino Puzzle Claimed Solved by Special Relativity" (Technology Review)

• "Particles Faster Than the Speed of Light? Not So Fast, Some Say" (New York Times)
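Going back to Ellis's supernova point for a moment: the "years earlier" claim is easy to check on the back of an envelope. Here is a rough sketch in Python. The specific figures are my assumptions rather than anything quoted in the report above: an early arrival of about 60 nanoseconds over a baseline of about 730 km for OPERA, and a distance of about 168,000 light years to supernova 1987a. Treat the result as an order-of-magnitude estimate only.

```python
# Assumed figures (not taken from the quoted report):
#   OPERA: neutrinos arrive roughly 60 ns early over a roughly 730 km baseline.
#   SN 1987a: roughly 168,000 light years away.
C = 299_792_458.0          # speed of light in m/s (exact by definition)
BASELINE_M = 730e3         # assumed OPERA baseline in metres
EARLY_S = 60e-9            # assumed early arrival in seconds
SN_DISTANCE_LY = 168_000.0 # assumed distance in light years

# Time light needs to cover the baseline.
light_time_s = BASELINE_M / C

# Fractional speed excess, approximately (v - c) / c for a tiny excess.
fractional_excess = EARLY_S / light_time_s

# Light needs SN_DISTANCE_LY years; a particle faster by that fraction
# would gain about (distance in light years) * (fraction) years.
head_start_years = SN_DISTANCE_LY * fractional_excess

print(f"fractional speed excess: {fractional_excess:.2e}")
print(f"supernova neutrino head start: {head_start_years:.1f} years")
```

On these assumed numbers the head start comes out at roughly four years, which is the sense in which a gap of mere hours is "difficult to reconcile" with the OPERA result.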

The comments on many of these posts are sociologically interesting too (I tell myself never to read comments on blogs/news sites, lest I lose faith in humanity; but sometimes I can't resist).

Note one extremely interesting aspect of many of these explanations: what is doing the explaining away of the OPERA results? Relativity. What is the theory that would be "falsified" by the OPERA results? Relativity. So relativity is apparently testifying on its own behalf! On its face, this looks like a problem. Is it? (You can replace a SWA question with your answer.)

Here's XKCD's suggestion of how to monetize skepticism about skepticism:

Also: check out the abstract to this article: "Can apparent superluminal neutrino speeds be explained as a quantum weak measurement?". It has my vote for best abstract to an academic paper for 2011.

Tuesday, October 25, 2011

Public Perception of Science v. Reality

This has been making the rounds on my (science-nerd-infested) FB wall. Seems about right. . . . (On the individual level, anyway.)

Friday, October 21, 2011

Quantum Trapping

Well, this is pretty cool, to say the least. <heh> Hopefully it's not a cruel hoax. . . .

Second Essay Assignment

You may now find the assignment for your second essay — due November 8th — on Moodle here. Note that I am asking you to write a different sort of essay and will accordingly be evaluating it on the basis of a different rubric. However, you should still take heed of the qualities I singled out in the previous rubric. And I especially recommend taking note of my Writing Advice, which is geared towards essays of this broad type, and the two Sample Essays (1 and 2) I posted in Moodle.

As I say (is there an echo here?), the key to good — or even competent — writing is effort and revision. Give yourself enough time to let the ideas percolate, but make sure you go through several drafts. Feel free to come chat with me about ideas, arguments, or drafts.

Thursday, October 20, 2011

Week 10: Science in a (Messy) Social Context

We've been discussing some of the more overtly "social" aspects of scientific investigation of late via Feyerabend. And while I take it that he offered us many interesting and important insights, there is a definite sense that his medicine is rather strong. However, he is working in an important tradition within philosophy of science: attempting to understand the sociology of science. Science is, after all, a particular human activity (something that we often do in groups) and thus potentially subject to social and psychological forces of which we may be only dimly aware.

This week, we'll examine different facets of these social factors. Prior to Feyerabend, our focus on confirmation theory was primarily individualistic. An individual scientist (or research group — a functional individual, in a sense) working on a particular problem proposes a hypothesis, makes relevant observations, does experiments, &c., that either raise or lower their confidence that the hypothesis is true. Suppose that our individual scientist’s confidence in the hypothesis is raised quite a bit: they come to (tentatively) accept the hypothesis as true. What then? Does the result become “scientific knowledge”?

That depends, at minimum, on its being accepted by a large portion of the wider scientific community. But in order for the result to even get heard by that community, it must cross an important gateway: peer-review. (Recall that this gateway has already made an appearance in this course: I insisted that your essays only draw from peer-reviewed sources.) In order for a result to be published, it must pass the scrutiny of other experts in the field, asking questions like “Was the methodology used appropriate?”, “Were the assumptions reasonable?”, “Were the relevant calculations performed correctly?”, and so on. Inasmuch as these checks rule out obvious sources of error, it seems that passing this scrutiny ought to increase our confidence that a given paper’s conclusions are correct.

On Tuesday, we'll talk about some recent reflections on a biasing effect in peer-review that suggests that we shouldn’t be nearly as confident about our research results as we tend to be. On Thursday, we will look at an interesting case study for scientific norms revolving around peer-review, bias, and propaganda: the debate about SDI and Nuclear Winter.

Tuesday (10/25): Collective Research Effort and its Foibles

• Ioannidis, “Why Most Published Research Findings Are False” [Journal Link]*

• Lehrer, “The Truth Wears Off” [PDF]

• Schooler, “Unpublished results hide the decline effect” [Journal Link]

Questions: (respond to two)

- On its face, Ioannidis's claim is quite bold. Do you think he succeeds in making his case?*

- There are at least two different interpretations of statements of the “Decline Effect” (e.g., “our facts were losing their truth”, “the effects appeared to be wearing off”, and so on); carefully distinguish between them.

- Why does regression to the mean provide a more satisfying explanation for the decline effect than the hypothesis that certain effects are simply declining? Do you suppose that the subject matter of Schooler’s investigation (precognition) has anything to do with the plausibility of this suggestion or can it be made independently of the particulars of that experiment?

- Does the decline effect offer us a skeptical argument about science comparable to Hume’s argument about induction?

- Reflect on the relevance of Publication Bias for the competing theories of Popper and Feyerabend.
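For the regression-to-the-mean question above, a toy simulation may help fix ideas. This is only a sketch under loudly stated assumptions, not a model of any of the studies in the readings: the true effect is set to zero, each "study" is one noisy measurement, and only first results above an arbitrary cutoff count as "striking" enough to get attention. All of the names and numbers here are hypothetical.

```python
import random
import statistics

rng = random.Random(0)   # fixed seed so the run is repeatable
TRUE_EFFECT = 0.0        # assumption: there is no real effect at all
NOISE_SD = 1.0           # assumption: measurement noise, standard deviation
THRESHOLD = 1.0          # hypothetical cutoff for a "striking" first result
N_STUDIES = 10_000

def run_study():
    """One noisy measurement of the (fixed) true effect."""
    return rng.gauss(TRUE_EFFECT, NOISE_SD)

# First round: every lab measures once; only striking results get noticed.
first_results = [run_study() for _ in range(N_STUDIES)]
striking = [x for x in first_results if x > THRESHOLD]

# Second round: each striking result is replicated once, with fresh noise.
replications = [run_study() for _ in striking]

initial_mean = statistics.mean(striking)
replication_mean = statistics.mean(replications)
print(f"{len(striking)} striking first results, mean {initial_mean:.2f}")
print(f"their replications, mean {replication_mean:.2f}")
```

Nothing in the simulated world changes between the two rounds, yet the selected results "decline" on replication simply because they were selected for being extreme. Whether that story fits the actual cases is, of course, the question.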

Thursday (10/27): Case Study: The “Star Wars” Defense Project & Nuclear Winter

• Oreskes & Conway, “Strategic Defense, Phony Facts, and the Creation of the George C. Marshall Institute” [PDF]

Questions: (respond to one)

- In what ways does it seem appropriate to think of strategic investigations as (analogous to) “scientific” investigations? Does the obvious phenomenon of bias in the former suggest anything about the potential for bias in the latter?

- What can we say about the testability of SDI? Was it straightforwardly “untestable” or is there a way of nuancing our understanding of testability?

- How do you suppose Feyerabend might react to the whole SDI-Nuclear Winter affair?

- What do you make of the controversy over Sagan’s publications in Parade and Foreign Affairs prior to the peer-reviewed publication of the TTAPS paper? Did Sagan have a duty to publish or a duty not to publish?

- What is the Fairness Doctrine? Comment on its relevance to scientific and policy research.

- What do you think of Oreskes and Conway’s analysis of Seitz’s critique of the Nuclear Winter hypothesis?

Tuesday, October 18, 2011

Further Reflections on Induction

Since a few confusions about the problem of induction persist — and the topic isn’t quite ready to go away — I thought I’d attempt some clarification. I’ll proceed in the time-honored form of an FAQ sheet. This isn’t meant to be exhaustive: I suggest circling back to the relevant Foster, Lipton, and Popper readings for further details. However, I’d be very happy to answer other questions you think should be included here (feel free to leave a comment or shoot me an email). And as usual, you’re welcome to join me in my office hours (or another time) to clarify any lingering puzzlement.

What is induction?

Broadly speaking, induction is a non-deductive form of inference. People often have specific ideas about what sorts of inferences count as induction. For example, they may say that inductive inference proceeds from specific to general, while deductive arguments proceed from general to specific. Thus “All men are mortal; Socrates is a man; therefore, Socrates is mortal” is a classic deductive argument, while “This man is mortal; this other man is mortal; that guy’s mortal, . . . ; therefore, all men are mortal” is an exemplary inductive argument.

However, a little reflection shows that this isn’t that great of a characterization, for either inductive or deductive arguments. Deductive arguments come in all shapes and sizes. Here’s one: “Professor Bermudez is either in his office or meeting with the dean; he’s not in his office, so he must be meeting with the dean.” Shoehorning this argument into the “general-to-specific” motto doesn’t seem right. We can find similar mismatches among inductive arguments. For example, consider one of the pieces of evidence for the Big Bang: “everywhere in the universe we look, there’s this hiss of background radiation; such a background would be nicely explained by the universe’s coming to be in a ‘Big Bang’; so therefore (probably) the big bang model of the universe’s origin is true.” We’ll look at arguments like this (sometimes called ‘abductive’ arguments or ‘inference to the best explanation’) in more detail in a few weeks. If anything, we’re going from general to particular there, yet the argument is clearly supposed to be inductive in our broad sense. This example also demonstrates the inadequacy of another popular characterization of induction: that it is about prediction of future events (or future observations). The arguments for the occurrence of a Big Bang are obviously not forward-looking. Science is about more than prediction: it is about finding out what happened and explaining it.

You said that the inference to the best explanation above was clearly inductive? Why? Because the argument is not deductively valid?

Good question! Though it’s true that the argument is not deductively valid (the conclusion doesn’t follow from the premises as a matter of logic alone: we can imagine the premises about the background radiation being true but the Big Bang theory being false), this fact alone isn’t enough to make the argument inductive. We wouldn’t want to say that deductive arguments are necessarily valid: that is the standard to which deductive arguments “strive”, but there are plenty of invalid deductive arguments. For example: “If Professor Bermudez is in his office, then he’s working; he’s not in his office; therefore, he’s not working.” The conclusion doesn’t follow from the premises in this case: it’s possible that Bermudez is working elsewhere. Thinking that this argument form is valid is a common enough mistake that it has its own name: ‘the fallacy of denying the antecedent’. What makes this argument deductive rather than inductive? The best answer has to do with the standard of evaluation that is likely intended by the arguer.

So induction is a weaker, less demanding standard than deduction?

That’s a somewhat misleading way of putting it. Induction and deduction are simply different standards. In the case of deductive arguments, we can tell whether they are valid by more or less algorithmic means. That’s because validity has to do with logical form (roughly, the grammatical structure) of an argument rather than its content. (This is why it’s often called ‘formal logic’ — not because it dresses up nicely.) But the level of certainty that this standard gives us comes at a price: triviality. There’s a sense that we don’t really learn much when we derive the conclusion of a deductively valid argument from its premises. We might not have worked it out, but the information was (in a sense) already there, buried, as it were, in the form of the premises. That shows us that deduction alone won’t be a good way of expanding our knowledge. Inductive arguments, on the other hand, purport to do this. That is why some call inductive inference “ampliative”.
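To make the “more or less algorithmic” point concrete, here is a minimal sketch of a truth-table check for propositional arguments, written in Python. It only covers simple sentential forms like the two Bermudez arguments above, and the function name `is_valid` and the letters O, D, and W are my own labels for illustration (O: he is in his office; D: he is meeting with the dean; W: he is working).

```python
from itertools import product

def is_valid(premises, conclusion, variables):
    """Return True if no truth assignment makes every premise true
    and the conclusion false (i.e., there is no counterexample row)."""
    for values in product([True, False], repeat=len(variables)):
        env = dict(zip(variables, values))
        if all(p(env) for p in premises) and not conclusion(env):
            return False  # found a counterexample row
    return True

# Disjunctive syllogism: O or D; not O; therefore D.
disjunctive_syllogism = is_valid(
    premises=[lambda e: e["O"] or e["D"], lambda e: not e["O"]],
    conclusion=lambda e: e["D"],
    variables=["O", "D"],
)

# Denying the antecedent: if O then W; not O; therefore not W.
# "If O then W" is encoded as "(not O) or W".
denying_antecedent = is_valid(
    premises=[lambda e: (not e["O"]) or e["W"], lambda e: not e["O"]],
    conclusion=lambda e: not e["W"],
    variables=["O", "W"],
)

print(disjunctive_syllogism)  # True
print(denying_antecedent)     # False
```

Notice that the check never asks what O, D, or W mean: it looks only at the form of the argument. The counterexample row for the second argument is the one where Bermudez is not in his office but is working anyway.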

But how can induction “amplify” our knowledge if the conclusions of inductive arguments are underdetermined by our evidence?

It is important to realize that the fact of underdetermination alone should not scupper our confidence in induction. For example, when some people are first exposed to the problem of induction they seem a little too eager to relinquish claims about knowledge of the future. It seems to me that they are confusing knowledge and certainty. Fair enough: I cannot be certain that, say, the sun will rise tomorrow. My evidence thus far underdetermines whether it will (perhaps a rogue star will sweep through our solar system and disrupt everything tonight!). But on the other hand, our evidence suggests pretty strongly that no such freakish occurrence will take place. Underdetermination alone should not get us to relinquish inductive inference. We use it all the time. It has been successful.

What is the justificatory problem of induction? Doesn’t it stem from underdetermination?

Underdetermination is only part of the story. Hume’s skeptical argument is roughly this: the fact that inductive inferences are underdetermined by evidence shows us that no deductive justification of the reliability of inductive inference will be forthcoming. But what’s the alternative? Induction!? If we say something like “when we’ve previously used induction, it’s shown itself to be more or less reliable”, we’re using induction. So if the reliability of induction is already an open question, we can’t use induction to defend its reliability. Otherwise, we reason in a circle (or “beg the question”), taking for granted what is in question. Ditto for attempts to justify particular inductive arguments by adding premises about the “Uniformity of Nature”. This gambit is in even worse trouble than using induction to justify induction. For one, presumably, we’d need induction in order to show that nature is uniform, running into the same circularity problem. And for two, it looks pretty doubtful that we can put specific enough sense to the claim that nature is uniform in order to make it come out both true and useful. It’s either going to be true but too weak (e.g., “Nature is uniform for the most part, in certain respects”) or strong enough to be of some use, but false (e.g., “Nature is uniform in all respects relevant to induction”).

So does Hume’s skeptical argument show that induction is unreliable?

No. For one, just because he gives us an argument whose conclusion is that inductive inference cannot be justified, doesn’t mean that we ought to believe that conclusion. We might try to show where the argument goes wrong. Or we might try to finesse the issue in a less direct way. But anyway, even if we did accept the conclusion that we cannot justify our use of inductive inference — that, as Lipton puts it, there is a “deep symmetry” between induction and other “counterinductive” principles — we shouldn’t confuse this with the claim that induction is unreliable. Lipton’s analogy to lying is revealing here: if you are wondering whether I am honest, there’s not a lot that I can say that should help you decide. But importantly, this fact — that I cannot effectively testify to my own honesty — does not show that I am not honest.

How does the descriptive problem fit into this picture?

Here’s one way of thinking about this story: we have general reasons for being skeptical about the possibility of justifying inductive inference’s reliability. We seem forced by underdetermination to use induction to justify itself, but this has us committing the fallacy of arguing in a circle. Subtle minds begin to ask whether we haven’t been asking the wrong questions. Does inductive inference need to be justified? What exactly is it that we’re looking for here? Compare deduction: what justifies a particular rule of deduction? It seems unlikely that we’ll be able to say anything here without using deductive inference. [Hey, maybe we could argue inductively that deduction is reliable — homework (replace a question of your choosing in an SWA): can this idea go anywhere?] So perhaps we should do with induction what we’ve done with deduction: simply articulate the rules very carefully and follow them.

However, it turns out that this is easier to say than to do. Not too surprising: it’s often harder to describe what we have a knack for doing (playing a musical instrument, shooting a basket, cooking the perfect omelet, whatever). Problems like the Ravens Paradox, the Tacking Problem, and Goodman’s New Riddle offer further challenges to particular descriptions of how we in fact reason inductively. Notice that these problems do not call into question the ways in which we reason (suggesting that we shouldn’t reason in those ways, say). Instead, they cast doubt on the accuracy of those descriptions. The justification of our practices needn’t enter into it at this point.

Thursday, October 13, 2011

Ghosts and Hauntings

I think I mentioned this event in class, but I just got the email about it:

"Ghosts and Hauntings: Decide for Yourself"

Lecture by Rich Robbins, Associate Dean

October 24th at 7PM in Trout Auditorium

Seems like this would be an . . . interesting case study for scientific methodology. Go and report back, eh?

Tuesday, October 11, 2011

First Essays

I'm nearly done grading your first essays. By tomorrow morning, I will send you each detailed comments via email. You should receive two attachments: a PDF of a completed rubric sheet and a Word document with comments and markup. (If you don't see anything on the latter, make sure that you're in the correct mode in Word: "Final showing markup".)

Technical details out of the way, let me tell you several things I know:

- Many (even most) of you are going to freak out a little when you see your rubric. Not that it'll probably help, but pretty much everyone was in the same boat. In fact, I was actually pleasantly surprised that you did this well on the first assignment (previous classes have certainly done worse).

- It may occur to you that I am being harsh or unfair (or . . . !). But hopefully you'll realize, on reflection, that I'm neither. In fact, I'm attempting to help you gain a valuable skill set by (a) holding you to high standards, (b) being honest with you about whether you are meeting those standards, and (c) offering as much help on paper and in person as it takes in order to get you there. I'm your advocate here. Better to learn now among friends (with a safety net) than in the so-called "real world" where the job market is fierce!

- You will put a lot more effort into your subsequent essays (or revisions) and by the end of the term produce work that is significantly better (gaining along the way a deep understanding of issues that you are only close to grasping now). You will look back on these first essays and be surprised that you wrote them only a few weeks before.

I know these things well enough by induction(!) over (surprisingly many) years of teaching students such as yourselves how to present and analyze complex and subtle ideas in writing. I structure my courses so that you will have ample opportunity to hone these capabilities without being punished for not being masters right out of the gate. Remember: of your two shorter essays, I will only count the better towards your final grade, and you may revise either of them.

So: try to avoid succumbing to Freakout (1) or Anger/Frustration (2) and instead focus on Improvement (3). Think about my comments, what you wrote, and how you can improve it. Look over my Writing Advice and linked resources again. Come chat with me about your understanding, your writing, your process, . . . whatever (you might also consider chatting with the folks at the Writing Center). Try revising your essay (even before you start the next one). Wash and repeat. This, unfortunately, is the only way to gain a skill like this. It's painful, messy, work-intensive, and often frustrating (I speak from experience on both sides of the table). But it is worth doing.

I have office hours tomorrow from 10AM–noon. As always, please feel welcome to stop by or arrange another time to come talk.

Saturday, October 8, 2011

Weeks 8–9: Is There a Scientific Method?

Let’s review. We’ve examined some reasons for concern over the idea that there is such a thing as “The Scientific Method”. The worries take various forms. For one, it appears difficult either to independently justify or to describe how scientists behave in different circumstances: even when we get close to such descriptions, they seem to fall apart under the pressure of certain philosophical thought-experiments or counterexamples. For two, it’s not clear that any of the attempts to attribute a special “scientific status” to certain theories have been successful or rule out even creationism or astrology.

One natural response to the second set of worries is to admit that a demarcation between science and non-science is bound to be vague, but that there are still clear cases of each. Compare: ‘bald’ is a vague concept, but there are certainly clear cases of bald people and “thatched” people. It might be difficult to come up with a demarcation criterion that provides even a vague separation between science and non-science, but that doesn’t mean that it’s impossible. Perhaps there are no necessary and sufficient conditions for a theory’s being a science; but this doesn’t mean that there’s nothing to say about the matter. To repurpose an example of Ludwig Wittgenstein’s, it might be difficult to offer necessary and sufficient conditions for something’s being a game — it’s a “family resemblance concept” — but that hardly means that there are no games or that games aren’t in some way special.

This response goes hand-in-hand with a natural response to the first set of worries: “Okay, so we learn that describing and justifying scientific methods (induction, testing, &c.) is difficult, but that shouldn’t surprise us much. And even if scientists fall short of behaving in the simple ways described by our models of these methods, they still have value as regulative ideals. Science is a rational enterprise even if individual scientists might not be entirely rational.”

Here’s where we pick up the story with Paul Feyerabend, whose thought we will study for at least three meetings. Feyerabend is an iconoclast in the philosophy of science. He has sometimes been called an epistemological anarchist, since he claims that the only rule of scientific methodology that deserves any assent is “Anything goes!” Feyerabend thus offers a rejoinder to the idea of scientific method as a regulative ideal. Scientists employ propaganda to convince others; they cajole, connive, misrepresent, believe when they shouldn’t. . . . And (here’s the radical thought): this is more or less as it should be! So buckle up for some radical views of science.

Thursday (10/13): Feyerabend’s Epistemic Anarchy

• Feyerabend, Against Method: Introduction, Chs. 1–4

Tuesday (10/18): Observation (Case Study: The Telescope)

• Against Method: Chs. 5–10 (skim pp. 79–82)

• French, Ch. 5

Thursday (10/20): The Social Status of Science

• Against Method: Chs. 11, 13, 15, 19 (Optional recommended: Ch. 17)

— 1st Box Project Presentation

Questions: Since we’re reading an anarchist, I thought it’d be an appropriate change of pace to go a little anarchic with your Short Writing Assignments (#7 and 8) for a spell. For the next three meetings (those listed above), there are no particular questions to answer. Write on whatever interests you about the reading. These may be questions, descriptions, responses, or other sorts of reflections. We’ll revert back to normalcy in Week 10.

Thursday, October 6, 2011

"Judgment Day" full video

As I mentioned in class today, you can watch the whole two-hour video about the Dover trial online here. If you're interested in more of the social (and even some of the scientific) background involved in the case, I encourage you to watch it.
